# R CODE

fit1 <- glm(y~yearMinus2000 + dailyrainfall + sin365 + cos365, data=d, family=poisson())

residuals(fit1, type = "response")1.1 Introduction

There are two important definitions in this course:

- Panel data

- Autocorrelation

Panel data is a set of data with measurements repeated at equally spaced points. For example, weight data recorded every day, or every week, or every year would be considered panel data. A person who records three weight measurements randomly in 2018 would not be considered panel data.

When you have panel data, autocorrelation is the correlation between subsequent observations. For example, if you have daily observations, then the 1 day autocorrelation is the correlation between observations 1 day apart, and likewise the 2 day autocorrelation is the correlation between observations 2 days apart.

In this course we will consider 5 scenarios where we have multiple observations for each geographical area:

- Panel data: One geographical area, no autocorrelation

- Panel data: One geographical area, with autocorrelation

- Not panel data: Multiple geographical areas

- Panel data: Multiple geographical areas, no autocorrelation

- Panel data: Multiple geographical areas, with autocorrelation

Note, the following scenario can be covered by standard regression models:

- Multiple geographical areas, one time point/observation per geographical area

1.2 Method summary

1.2.1 Panel data: One geographical area, no autocorrelation

// STATA CODE

glm y yearminus2000 dailyrainfall cos365 sin365, family(poisson)1.2.2 Panel data: One geographical area, with autocorrelation

// STATA CODE

glm y yearminus2000 cos365 sin365, family(poisson) vce(robust)# R CODE

fit <- MASS::glmmPQL(y~yearMinus2000+sin365 + cos365, random = ~ 1 | ID,

family = poisson, data = d,

correlation=nlme::corAR1(form=~dayOfSeries|ID))

r <- residuals(fit1, type = "normalized")

pacf(r)1.2.3 Not panel data: Multiple geographical areas

// STATA CODE

meglm y x yearMinus2000 || fylke:, family(poisson)# R CODE

fit <- lme4::glmer(y~x + yearMinus2000 + (1|fylke),data=d,family=poisson())1.2.4 Panel data: Multiple geographical areas, no autocorrelation

// STATA CODE

meglm y yearminus2000 cos365 sin365 || fylke:, family(poisson)# R CODE

fit <- MASS::glmmPQL(y~yearMinus2000+sin365 + cos365, random = ~ 1 | fylke,

family = poisson, data = d)

r <- residuals(fit1, type = "normalized")

pacf(r)1.2.5 Panel data: Multiple geographical areas, with autocorrelation

// STATA CODE

meglm y yearminus2000 cos365 sin365 || fylke:, family(poisson) vce(robust)# R CODE

fit <- MASS::glmmPQL(y~yearMinus2000+sin365 + cos365, random = ~ 1 | fylke,

family = poisson, data = d,

correlation=nlme::corAR1(form=~dayOfSeries|fylke))

r <- residuals(fit1, type = "normalized")

pacf(r)1.3 Identifying your scenario

1.3.1 Step 1: Do you have panel data?

This step should be fairly simple. If your data has equally spaced time intervals between them, you have panel data.

1.3.2 Step 2: Do you have multiple geographical areas?

Again, fairly simple, just look at your data.

1.3.3 Step 3: Do you have autocorrelation?

Firstly, you must run a model pretending that you do not have autocorrelation.

You then inspect the residuals from the model and see if autocorrelation exists. This is done with two statistical procedures: pacf (for autoregressive models, the most common type of autocorrelation), and acf (for moving average models, a less common type of autocorrelation).

1.3.4 AR(1) data

y <- round(as.numeric(arima.sim(model=list("ar"=c(0.5)), rand.gen = rnorm, n=1000)))With autoregressive data, a pacf plot contains a number of sharp significant lines, indicating how many subsequent observations have autocorrelation. i.e. if one line is significant, it means that each observation is only correlated with its preceeding observation (AR(1)). If two lines are significant, it means that each observation is correlated with its two preceeding observations (AR(2)). The following plot represents AR(1) data.

pacf(y)

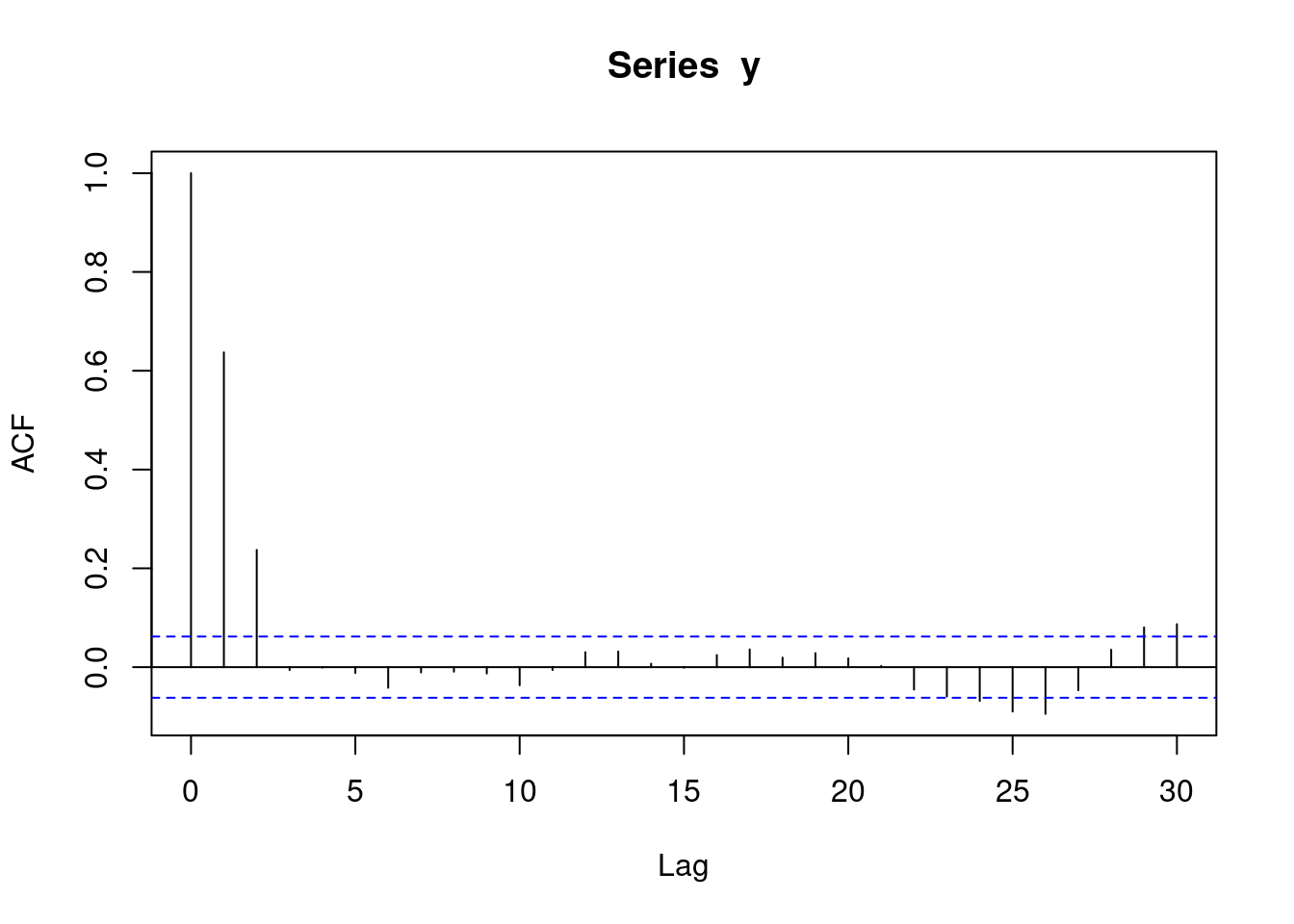

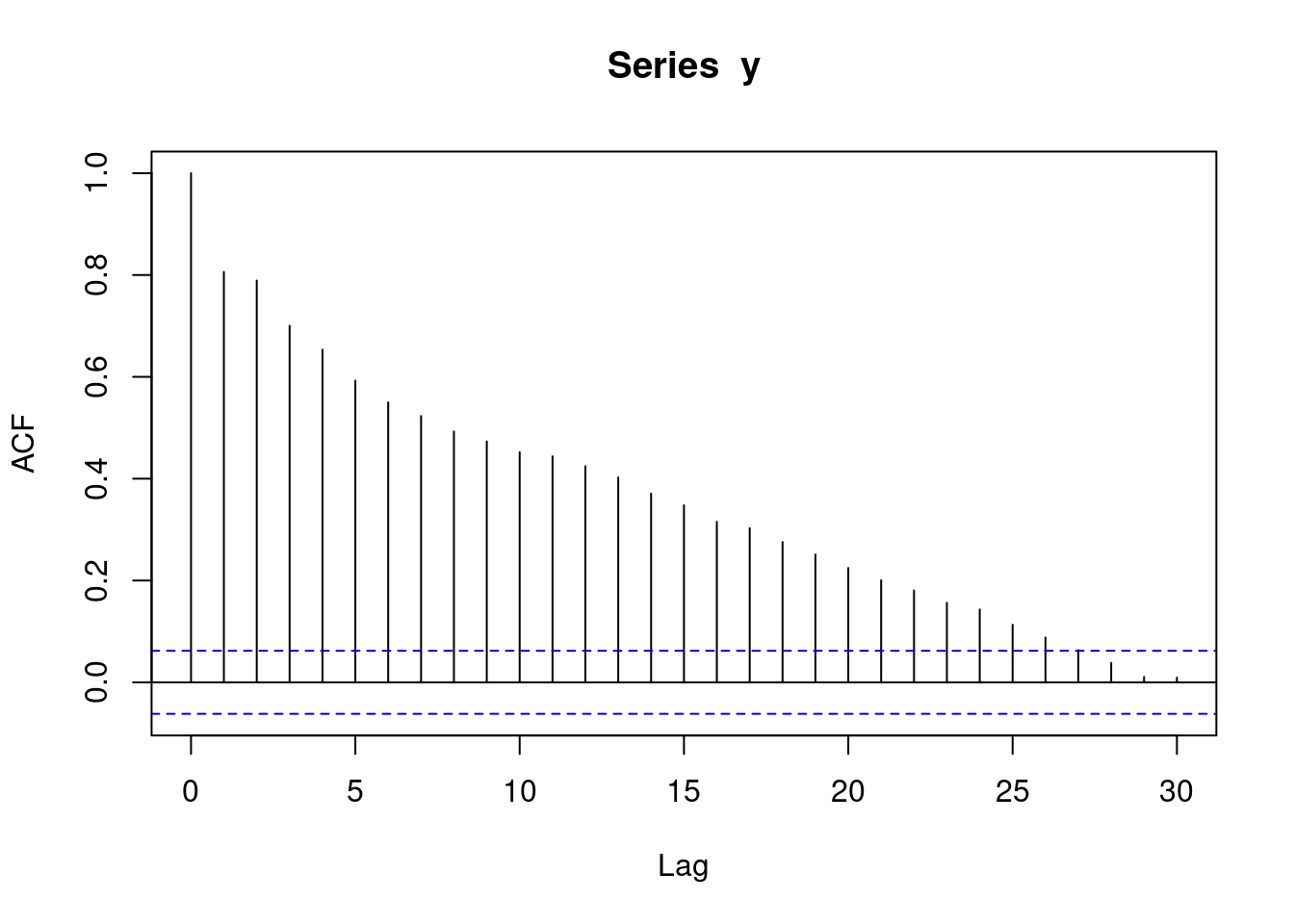

With autoregressive data, an acf plot contains a number of decreasing lines. The following acf plot represents some sort of AR data. Note that the acf plot displays lag 0 (which is pointless and can be ignored), while the pacf plot does not.

acf(y)

1.3.5 AR(2) data

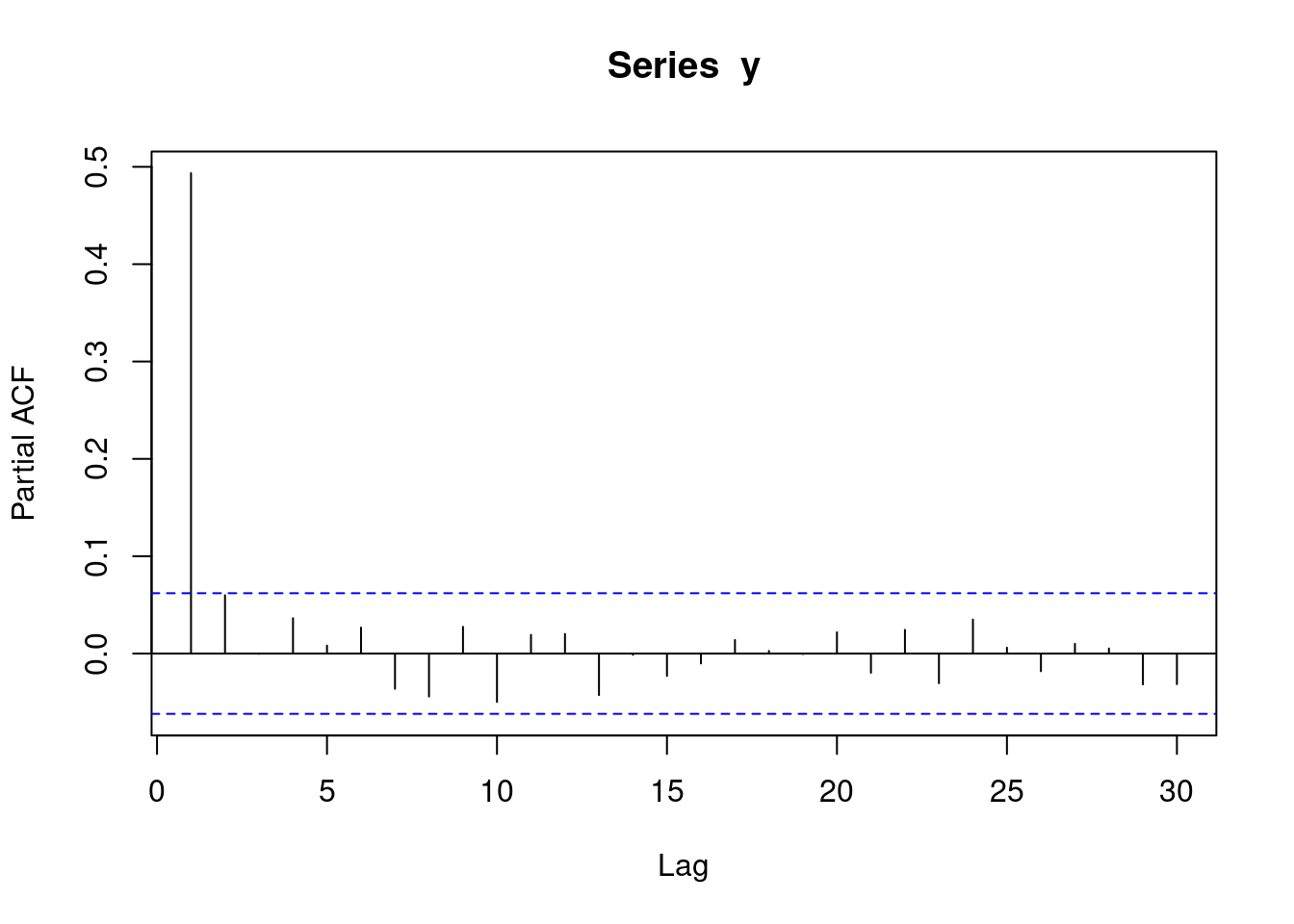

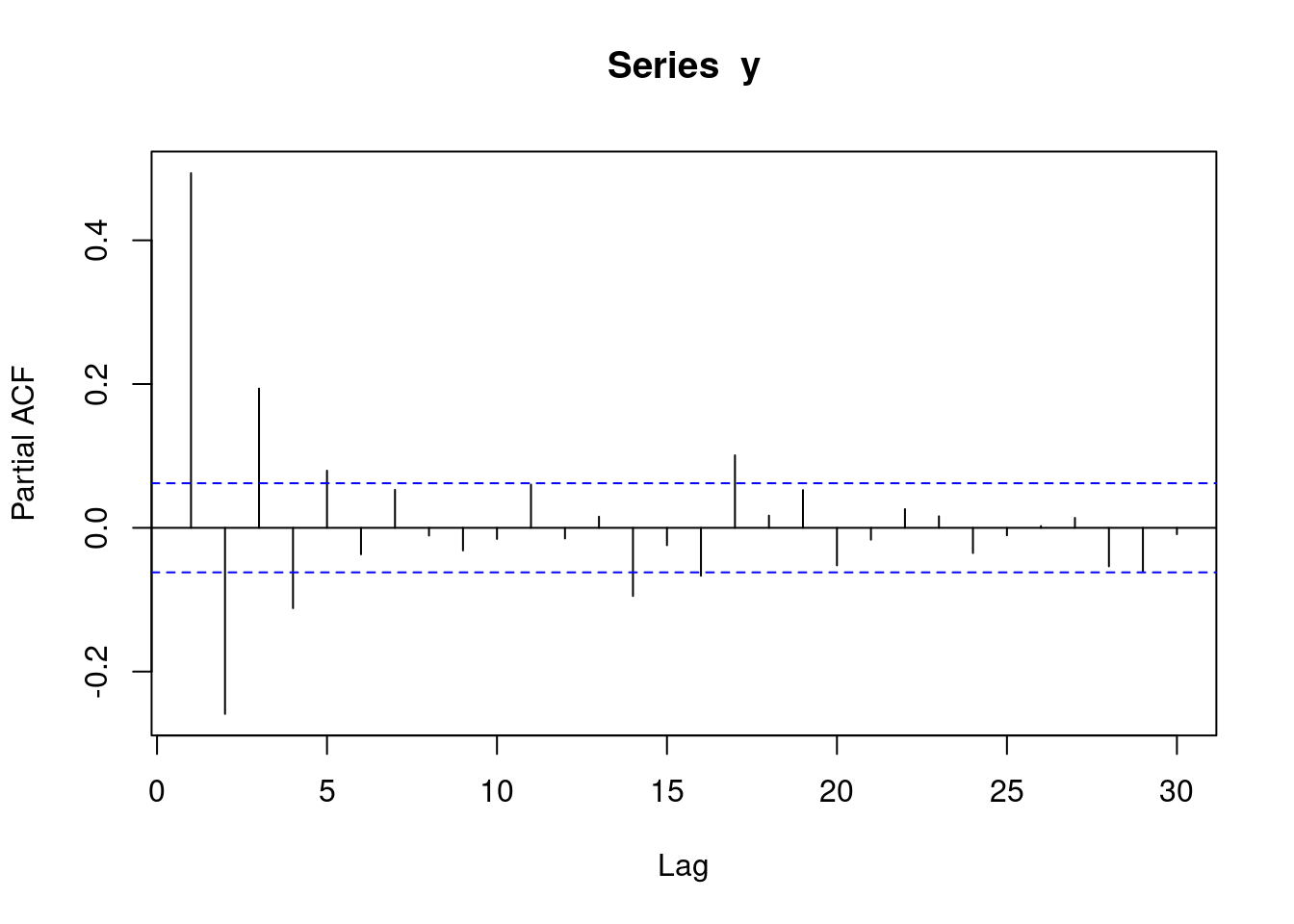

y <- round(as.numeric(arima.sim(model=list("ar"=c(0.5,0.4)), rand.gen = rnorm, n=1000)))The following pacf plot represents AR(2) data. This means that each observation is correlated with its two preceeding observations (AR(2)).

pacf(y)

The following acf plot represents some sort of AR data:

acf(y)

1.3.6 MA(1) data

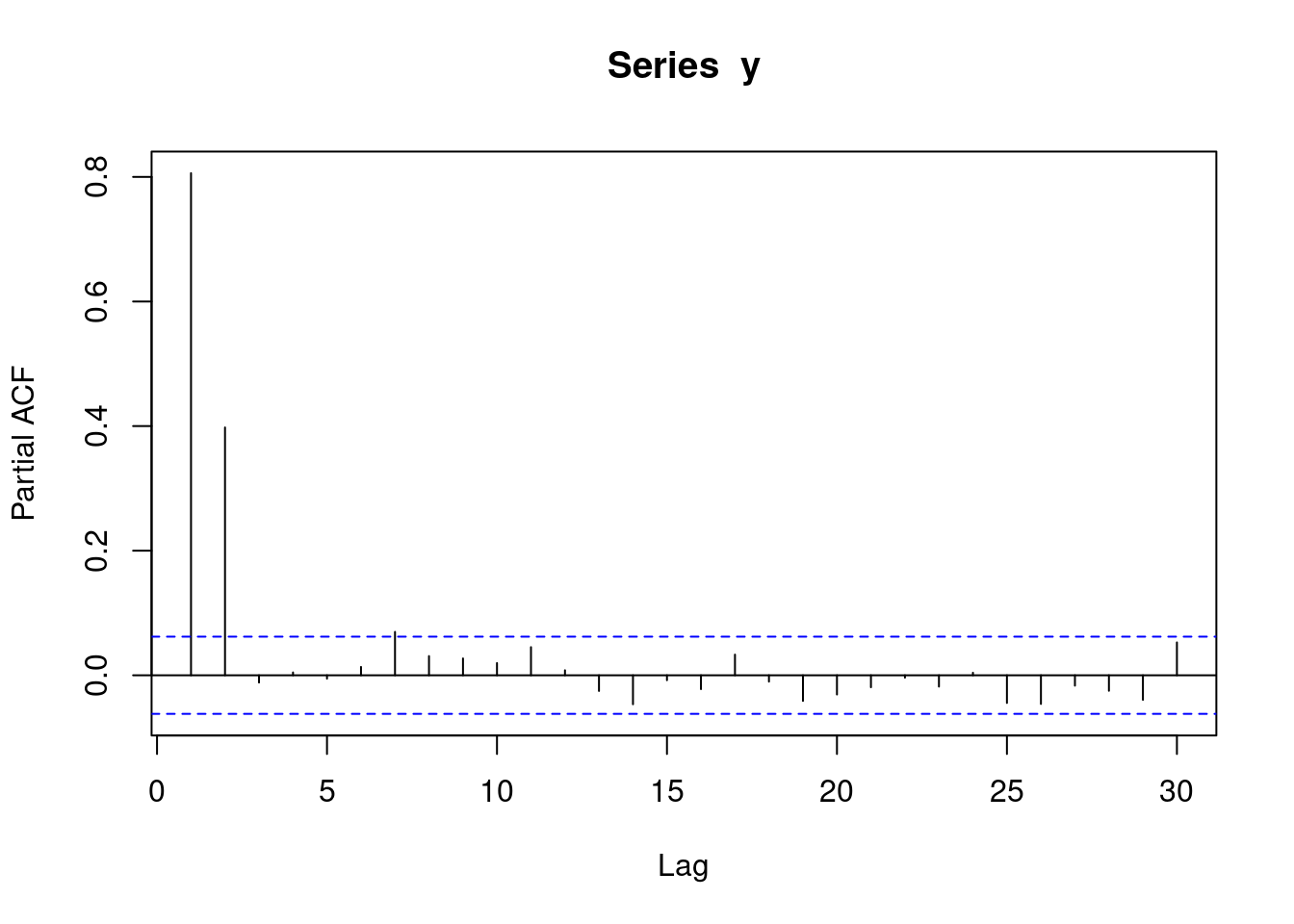

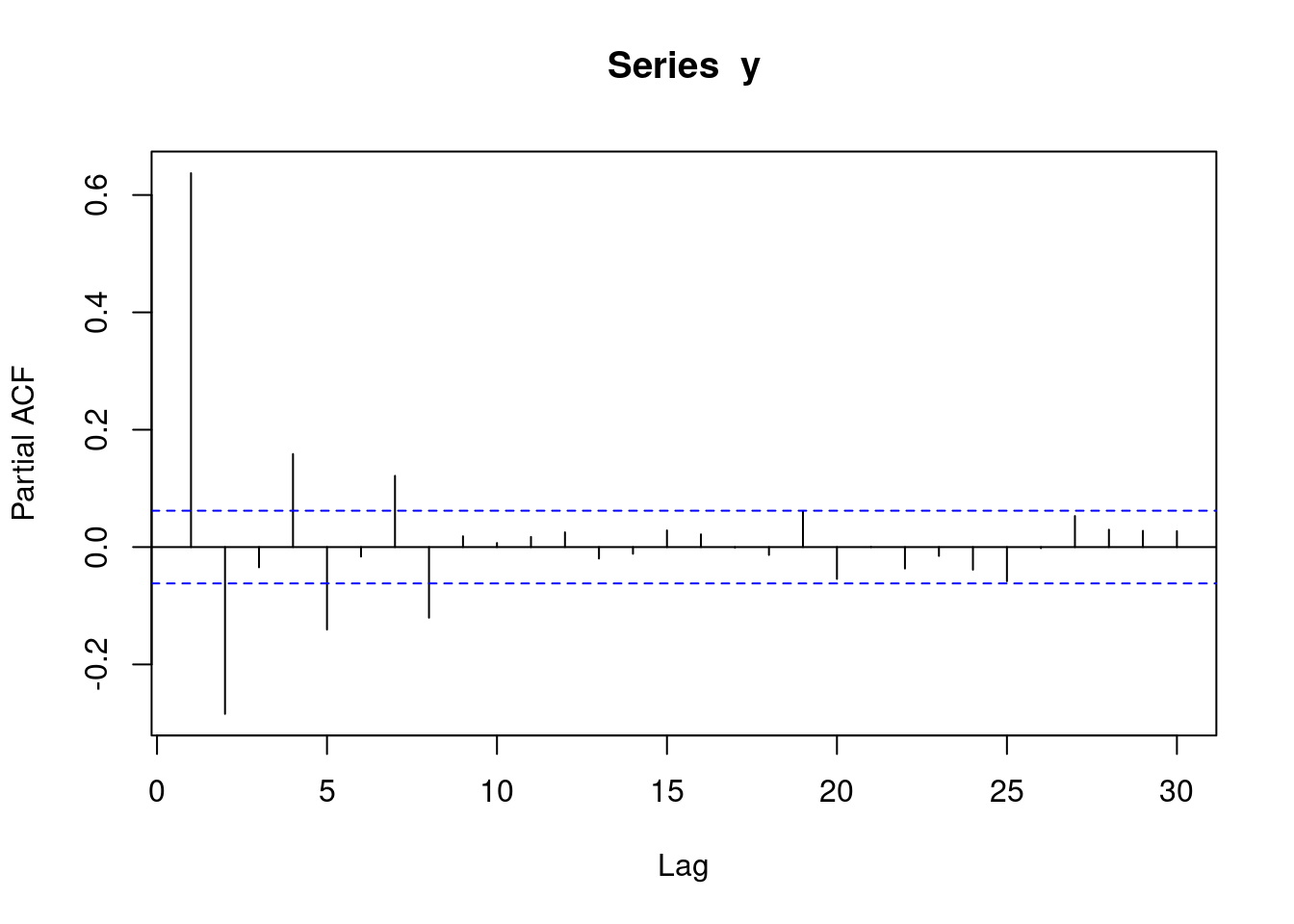

y <- round(as.numeric(arima.sim(model=list("ma"=c(0.9)), rand.gen = rnorm, n=1000)))With moving average data, a pacf plot contains a number of decreasing lines. The following pacf plot represents some sort of MA data.:

pacf(y)

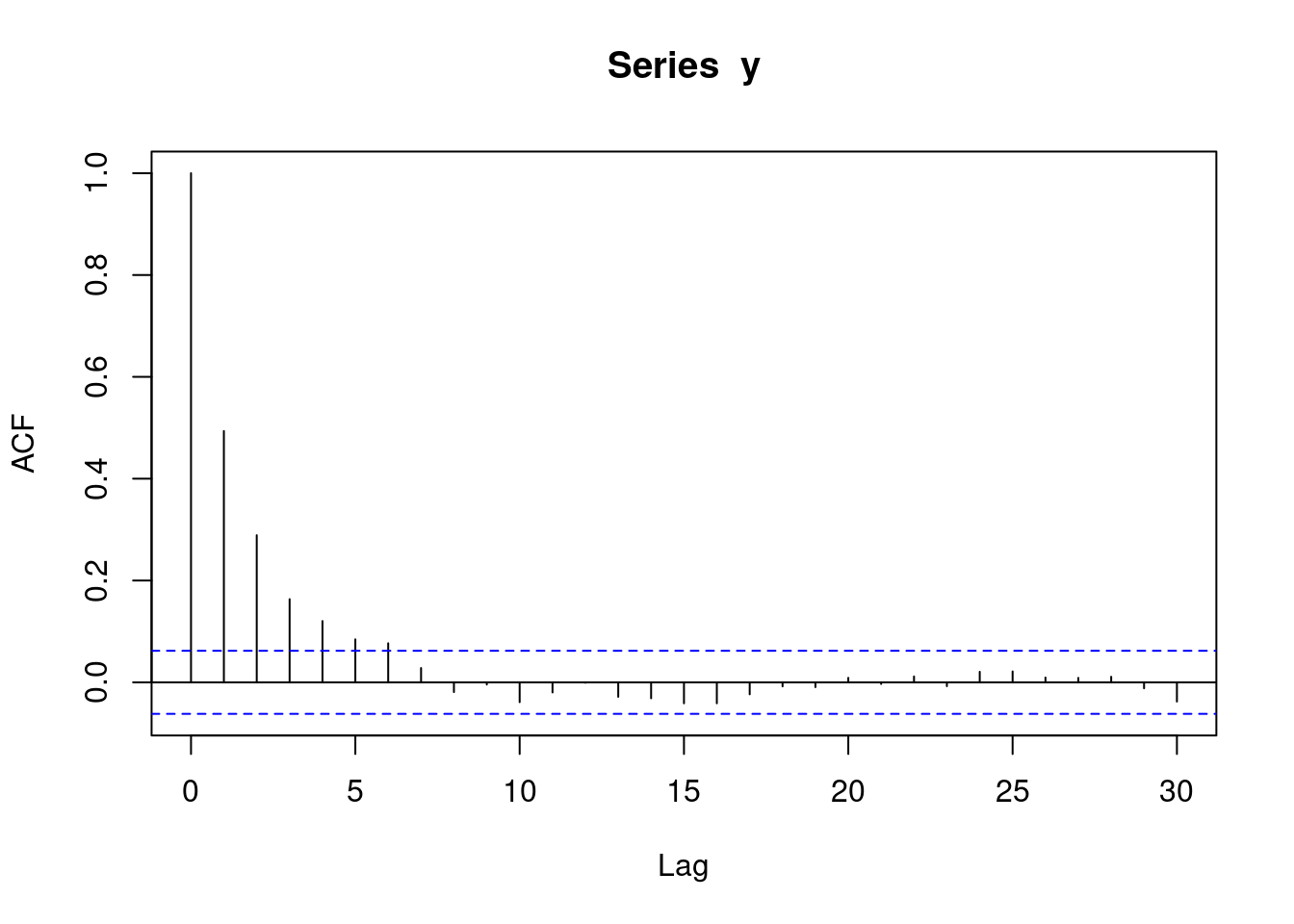

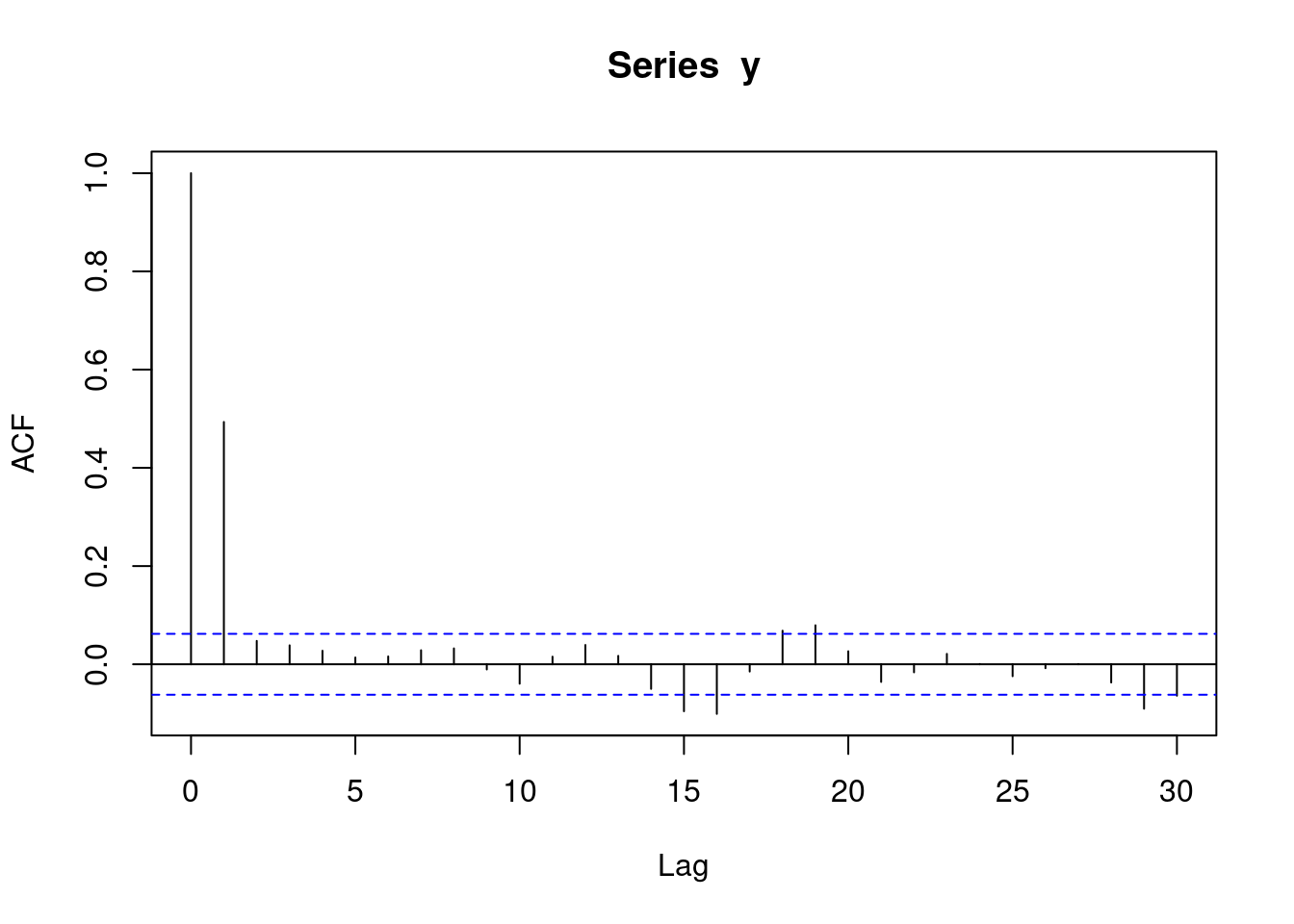

With moving average data, an acf plot contains a number of sharp significant lines, demarking how many subsequent observations have autocorrelation. i.e. if one line is significant, it means that each observation is only correlated with its preceeding observation. If two lines are significant, it means that each observation is correlated with its two preceeding observations. The following plot represents MA(1) data. Note that the acf plot displays lag 0 (which is pointless and can be ignored), while the pacf plot does not.

acf(y)

1.3.7 MA(2) data

y <- round(as.numeric(arima.sim(model=list("ma"=c(0.9,0.6)), rand.gen = rnorm, n=1000)))The following pacf plot represents some sort of MA data.

pacf(y)

The following acf plot represents MA(2) data. This means that each observation is correlated with its two preceeding observations.

acf(y)